Portfolio for Boston Dynamics, Robotics Software Engineer, Stretch

(A full-stack-focused portfolio is available here)

I’ve spent nearly a decade building robots that work in the real world: not just in simulation, not just in the lab, but deployed in parks, on public roads, and in active research environments. The common thread across all of my work is integration: making perception, planning, control, and hardware play nicely together on complex platforms where failure isn’t an option.

That’s what excites me about Stretch. Warehouse environments are messy, dynamic, and unforgiving, exactly the kind of place where robust behavior planning and careful systems integration make the difference between a demo and a product.

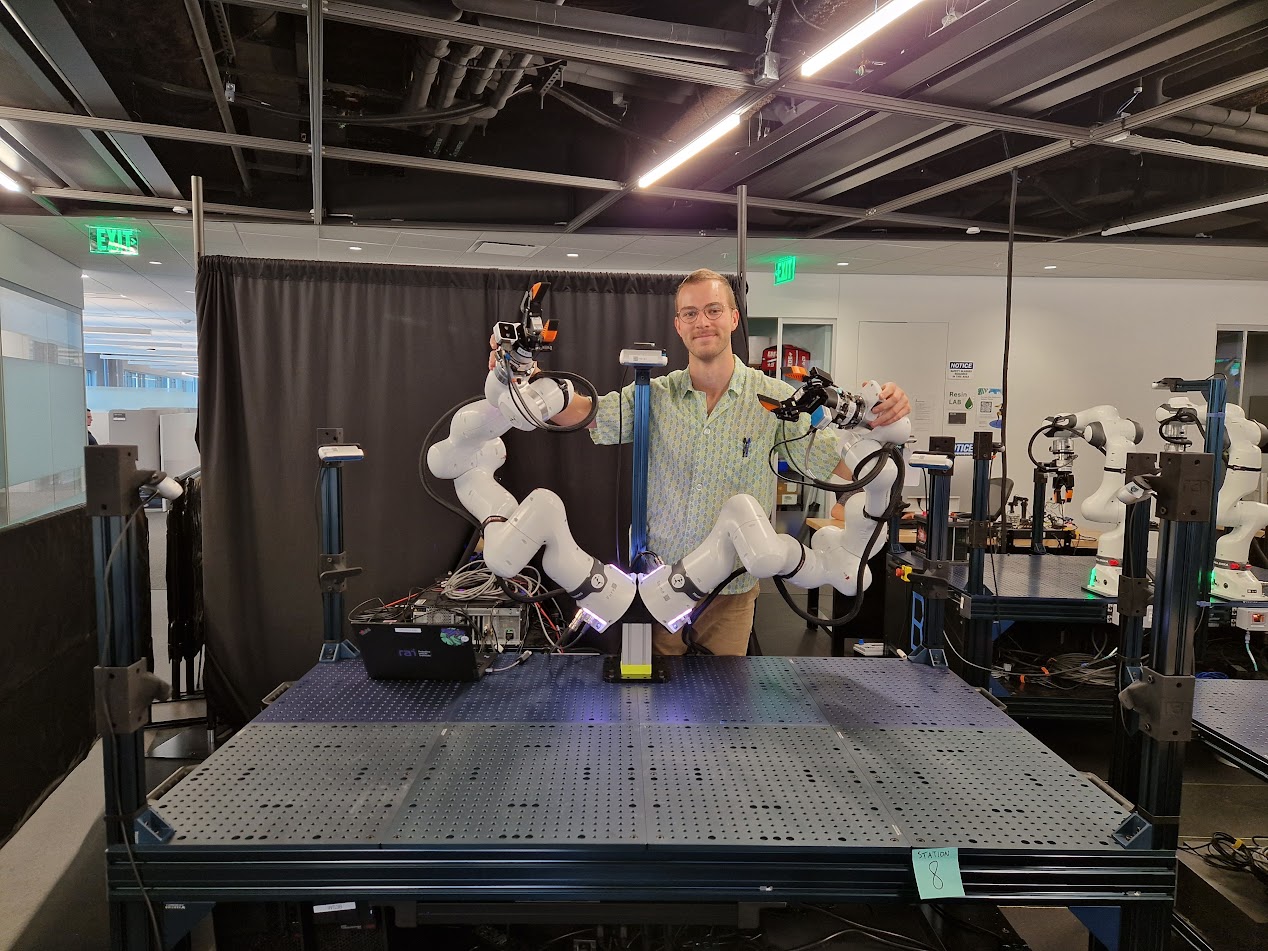

Manipulation engineering at RAI Institute (2025 — Present)

Since June 2025, I’ve served as the operations engineer of a manipulation lab with 24+ Franka robot arms supporting five research groups. While much of my current role is more infrastructure-focused, it keeps me sharp on the integration and debugging skills that matter for Stretch. I’ve also developed novel hardware and software tools that researchers have come to depend on.

Force-aware teleoperation device

I developed a novel force-aware leader arm teleoperation device with gravity compensation, force feedback, and programmatic friction compensation. It’s a 7-DOF leader arm that feels effortless to move, running through a high-frequency controller with optimized inverse dynamics calculations. It’s now used by nearly every manipulation research group at RAI, and we’re in the process of open-sourcing the hardware and software.

Central diagnostics stack

I also built the lab’s core diagnostics system: decentralized agents across a dozen machines, a full-stack web dashboard with interactive 3D visuals, and centralized logging. It’s the kind of tooling that lets you trace a vague hardware symptom to a specific subsystem quickly.

Cleaning up our operations

I spearheaded a large standardization initiative that unified several distinct research testbeds, each with its own manipulators, sensors, compute, and networking, into a single, clearly defined standard. This meant gathering feedback from stakeholders across the five research groups that share the testbeds.

Reforestation robot at CMU (2023 — 2025)

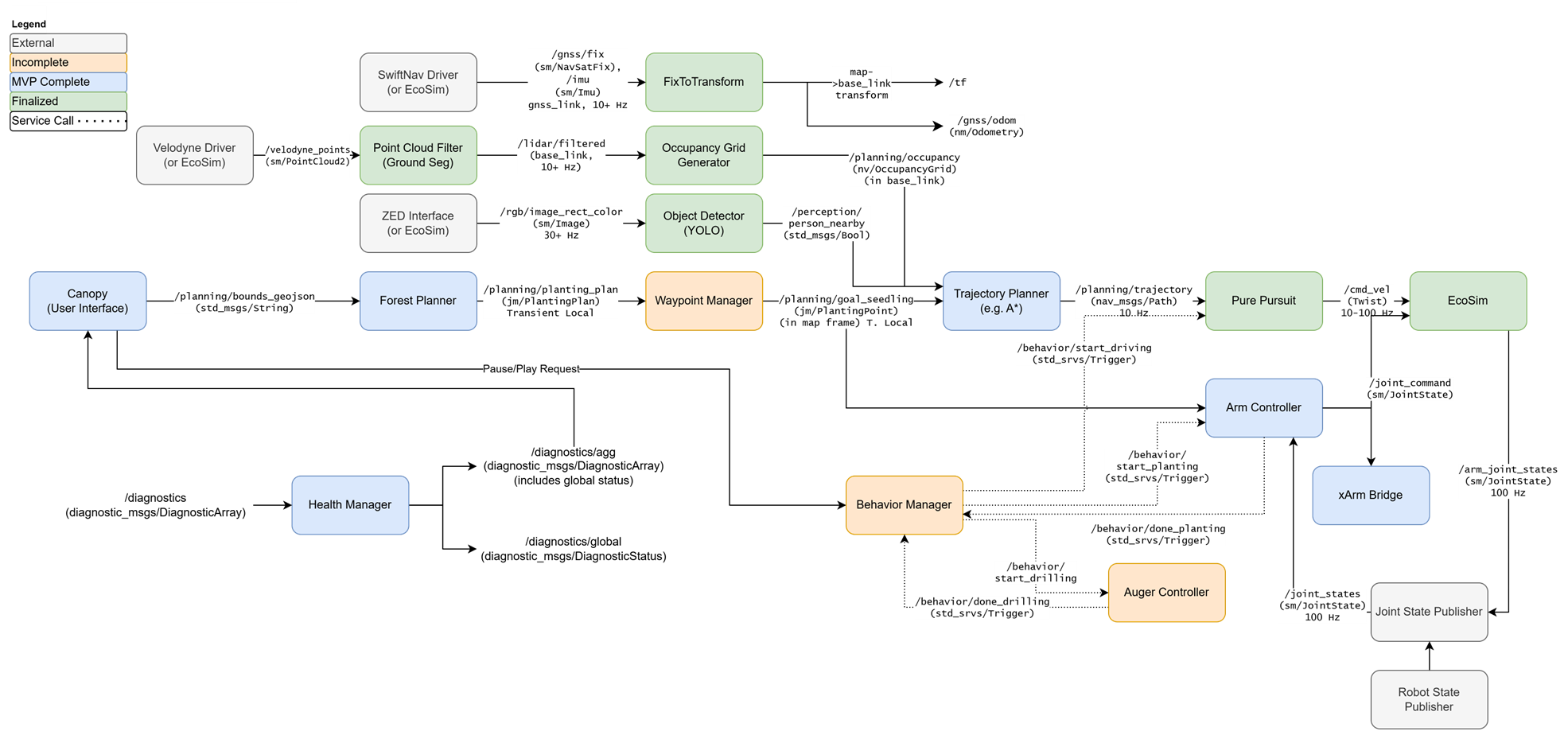

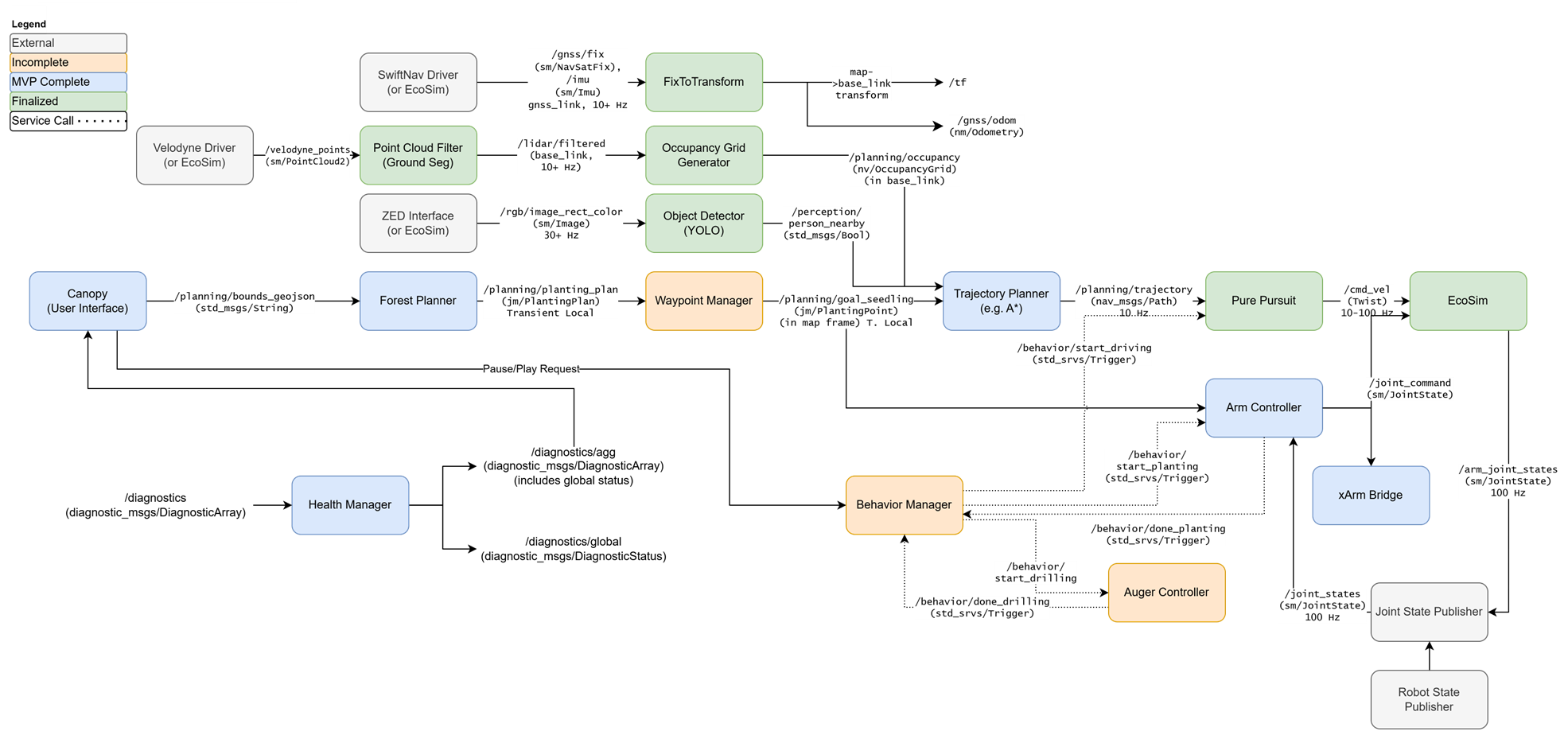

From 2023 to 2025, I served as project manager and software lead for an award-winning reforestation robot in the Kantor Lab at CMU’s Robotics Institute. I architected and implemented the complete robotics software stack in ROS2, spanning perception, behavior planning, navigation, manipulation, and ecological modeling.

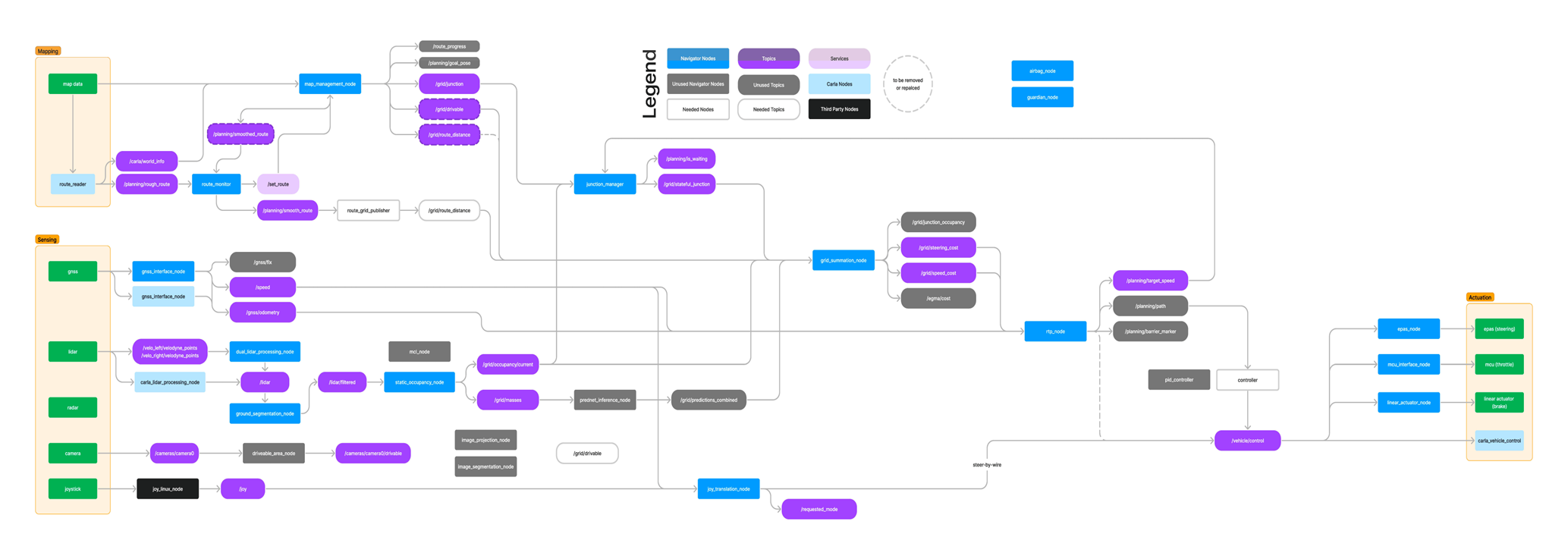

The software diagram for Jonny, the second reforestation robot that I helped build at CMU. It includes the Behavior Manager (FSM), Forest Planner and Waypoint Manager (high-level waypoint generation), Trajectory Planner (using costmaps), and local control using Pure Pursuit.

Behavior trees & task planning

The robot’s autonomy was built on behavior trees that coordinated a complex task sequence: navigate to a planting site, align with the GPS waypoint, lower the auger, drill, deposit a seedling, and move on — while handling faults like soil obstructions, GPS drift, and mechanical jams. The behavior system managed transitions between autonomous planting, assisted teleoperation, and manual override.
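As a rough illustration of how a behavior tree can sequence the planting steps above and recover from a fault, here is a minimal Python sketch. It is not the code that ran on the robot: the node classes, the `build_planting_tree` helper, and the step names (including the `clear_obstruction_and_redrill` recovery step) are hypothetical, and each action simply records its name instead of commanding hardware.

```python
from enum import Enum
from typing import Callable, List


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2
    RUNNING = 3


class Action:
    """Leaf node wrapping a callable that returns a Status."""

    def __init__(self, name: str, fn: Callable[[], Status]):
        self.name = name
        self.fn = fn

    def tick(self) -> Status:
        return self.fn()


class Sequence:
    """Ticks children in order; stops at the first child that is not SUCCESS."""

    def __init__(self, children: List):
        self.children = children

    def tick(self) -> Status:
        for child in self.children:
            status = child.tick()
            if status != Status.SUCCESS:
                return status
        return Status.SUCCESS


class Fallback:
    """Ticks children in order; stops at the first child that is not FAILURE."""

    def __init__(self, children: List):
        self.children = children

    def tick(self) -> Status:
        for child in self.children:
            status = child.tick()
            if status != Status.FAILURE:
                return status
        return Status.FAILURE


def build_planting_tree(log: List[str], soil_blocked: bool) -> Sequence:
    """Hypothetical planting tree; actions only append their name to `log`."""

    def step(name: str, status: Status = Status.SUCCESS) -> Action:
        def run() -> Status:
            log.append(name)
            return status
        return Action(name, run)

    # Drilling fails when the (simulated) soil is obstructed.
    drill = step("drill", Status.FAILURE if soil_blocked else Status.SUCCESS)
    return Sequence([
        step("navigate_to_site"),
        step("align_with_waypoint"),
        step("lower_auger"),
        # Try to drill; if that fails, run the recovery action instead.
        Fallback([drill, step("clear_obstruction_and_redrill")]),
        step("deposit_seedling"),
    ])
```

Calling `build_planting_tree(log, soil_blocked=True).tick()` runs the recovery branch before depositing the seedling, which is the kind of fault handling a fallback node is meant to express.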

Manipulation & grasping

The planting mechanism required precise coordination between a robotic arm and a custom auger. I developed the control sequencing for the manipulation pipeline, including force-based detection of soil contact and obstruction handling.

Our second reforestation robot, Jonny, drills a hole and plants a seed using an xArm with a custom, low-cost dual end effector that I designed. The planting sequence is guided by an FSM. The drilled hole is aligned with the grasper using an eye-in-hand stereo camera.

Navigation & localization

I implemented GPS-guided waypoint navigation with local obstacle avoidance. The system operated in unstructured outdoor terrain — grass, slopes, tree roots — where clean odometry is a luxury.
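The software diagram lists Pure Pursuit for local control. For readers unfamiliar with it, the core of the method is a single curvature formula, sketched below in Python. This is the textbook form, not the robot's actual controller; the function name and the tuple-based pose representation are assumptions made for illustration.

```python
import math
from typing import Tuple


def pure_pursuit_curvature(pose: Tuple[float, float, float],
                           goal: Tuple[float, float]) -> float:
    """Curvature (1/m) of the arc from the robot pose to a lookahead point.

    `pose` is (x, y, heading in radians) and `goal` is (x, y), both in the
    same world frame. Positive curvature turns left.
    """
    x, y, heading = pose
    dx, dy = goal[0] - x, goal[1] - y
    # Lateral offset of the goal in the robot frame (x forward, y left).
    lateral = -math.sin(heading) * dx + math.cos(heading) * dy
    dist_sq = dx * dx + dy * dy
    if dist_sq == 0.0:
        return 0.0  # Already at the lookahead point; drive straight.
    return 2.0 * lateral / dist_sq
```

Multiplying the returned curvature by the commanded forward speed gives an angular velocity for a differential-drive or skid-steer base.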

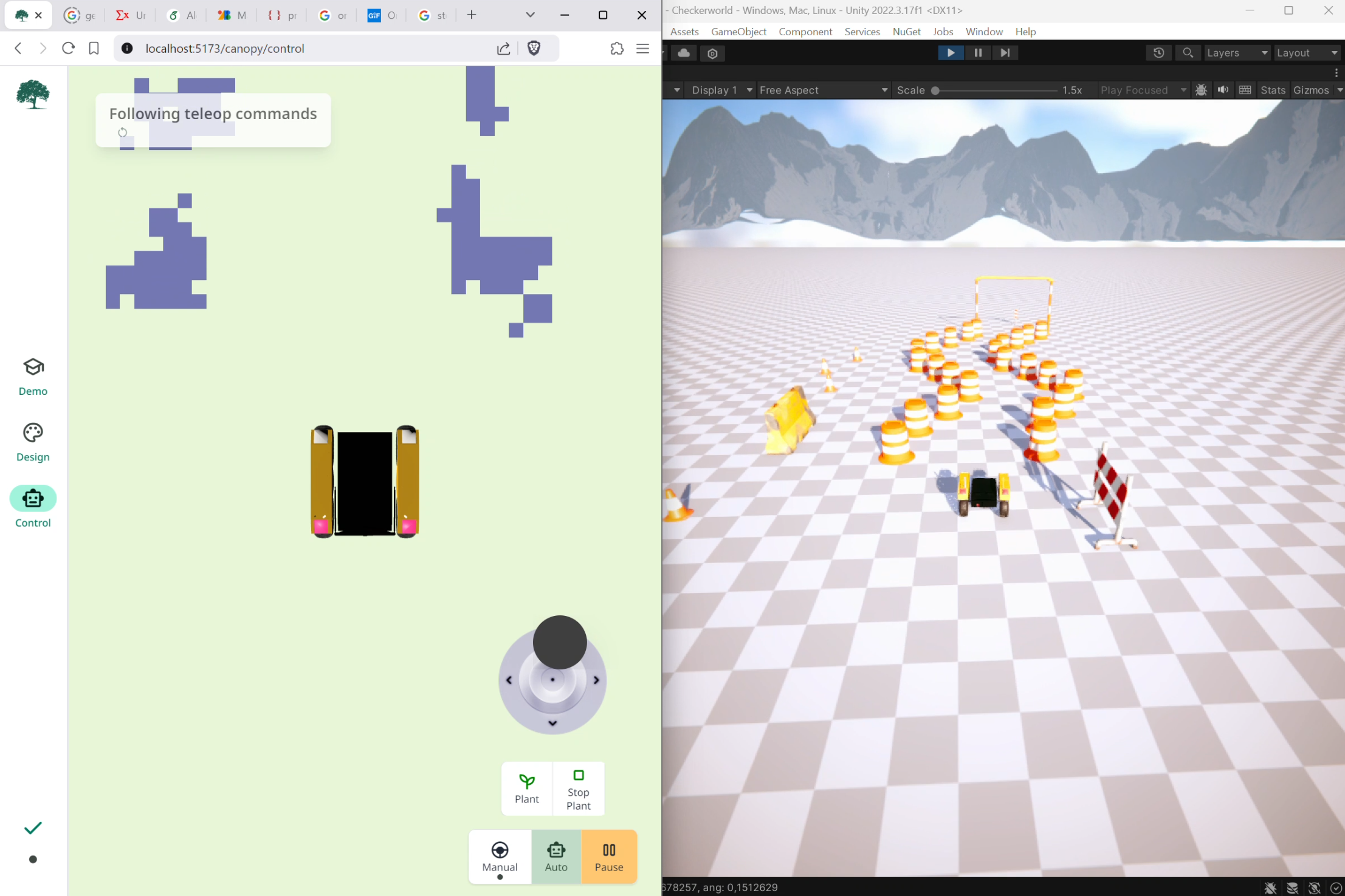

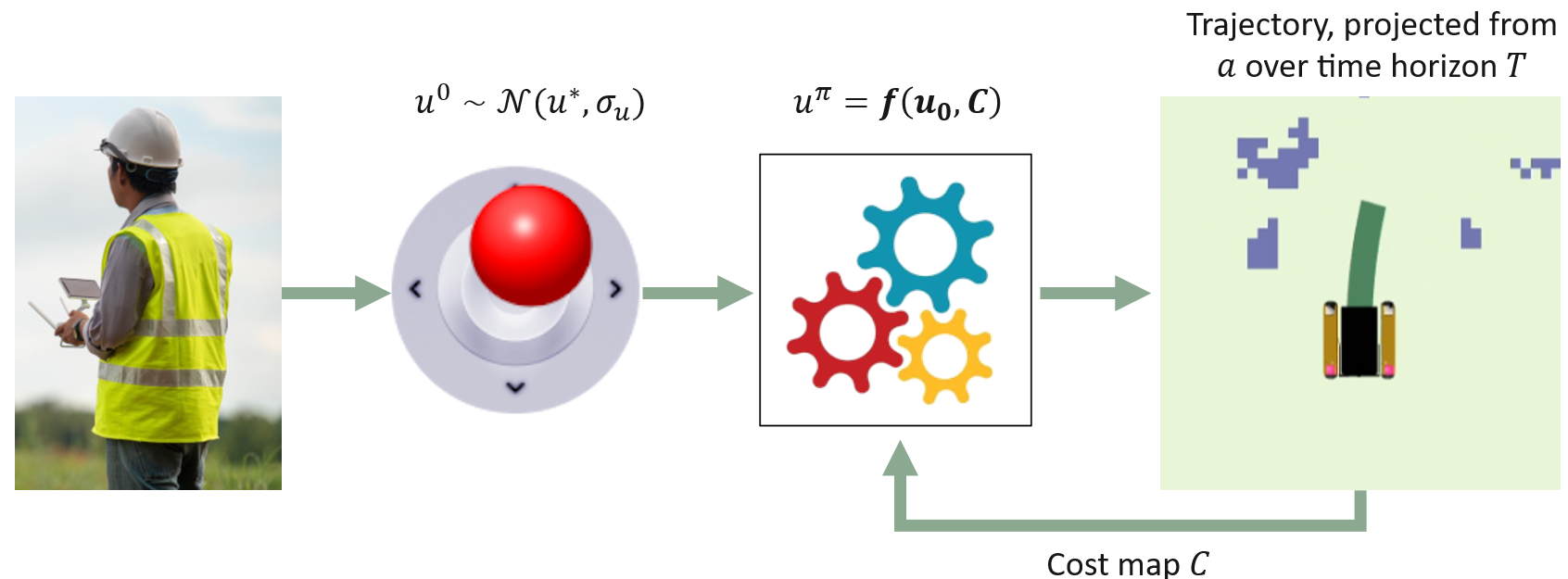

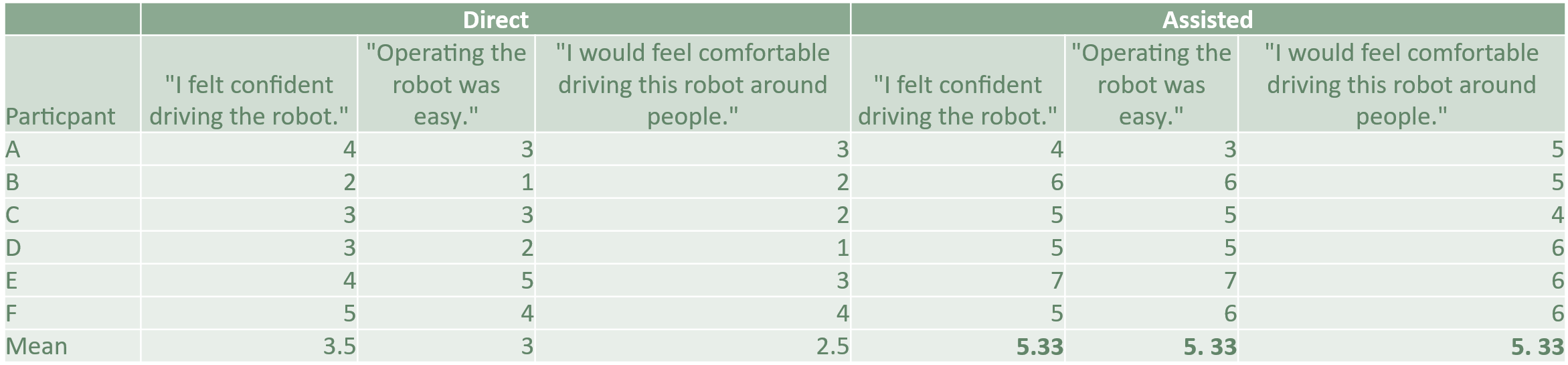

Assisted teleoperation

I implemented a hybrid control scheme for teleop in the field, and validated the scheme with a user study. The method blends direct joystick inputs, which I assume to be noisy, with fused perception data structured as a costmap, to produce adjusted teleop commands guaranteed to be safe.
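One simple way to blend a joystick command with a costmap is to roll the command forward in time and scale the speed by the worst cost along the predicted path. The Python sketch below shows that idea only; it is not the validated method described above, and the function name, the unicycle motion model, the normalized `[0, 1]` cost values, and all parameters are assumptions.

```python
import math
from typing import List, Tuple


def adjust_teleop(cmd: Tuple[float, float],
                  costmap: List[List[float]],
                  pose: Tuple[float, float, float],
                  resolution: float = 0.5,
                  horizon: float = 2.0,
                  dt: float = 0.25,
                  lethal: float = 0.9) -> Tuple[float, float]:
    """Scale a (linear, angular) joystick command by the cost ahead.

    `costmap[row][col]` holds costs in [0, 1], with row indexing y and col
    indexing x, at `resolution` meters per cell. `pose` is (x, y, heading).
    """
    v, w = cmd
    x, y, heading = pose
    worst = 0.0
    # Forward-simulate a unicycle model under the raw joystick command.
    for _ in range(int(horizon / dt)):
        heading += w * dt
        x += v * math.cos(heading) * dt
        y += v * math.sin(heading) * dt
        row, col = int(y // resolution), int(x // resolution)
        if 0 <= row < len(costmap) and 0 <= col < len(costmap[0]):
            worst = max(worst, costmap[row][col])
        else:
            worst = 1.0  # Treat cells outside the map as lethal.
    if worst >= lethal:
        return (0.0, w)  # Stop forward motion but still allow turning.
    return (v * (1.0 - worst), w)
```

Leaving the angular command untouched lets the operator steer away from an obstacle even when forward motion is blocked.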

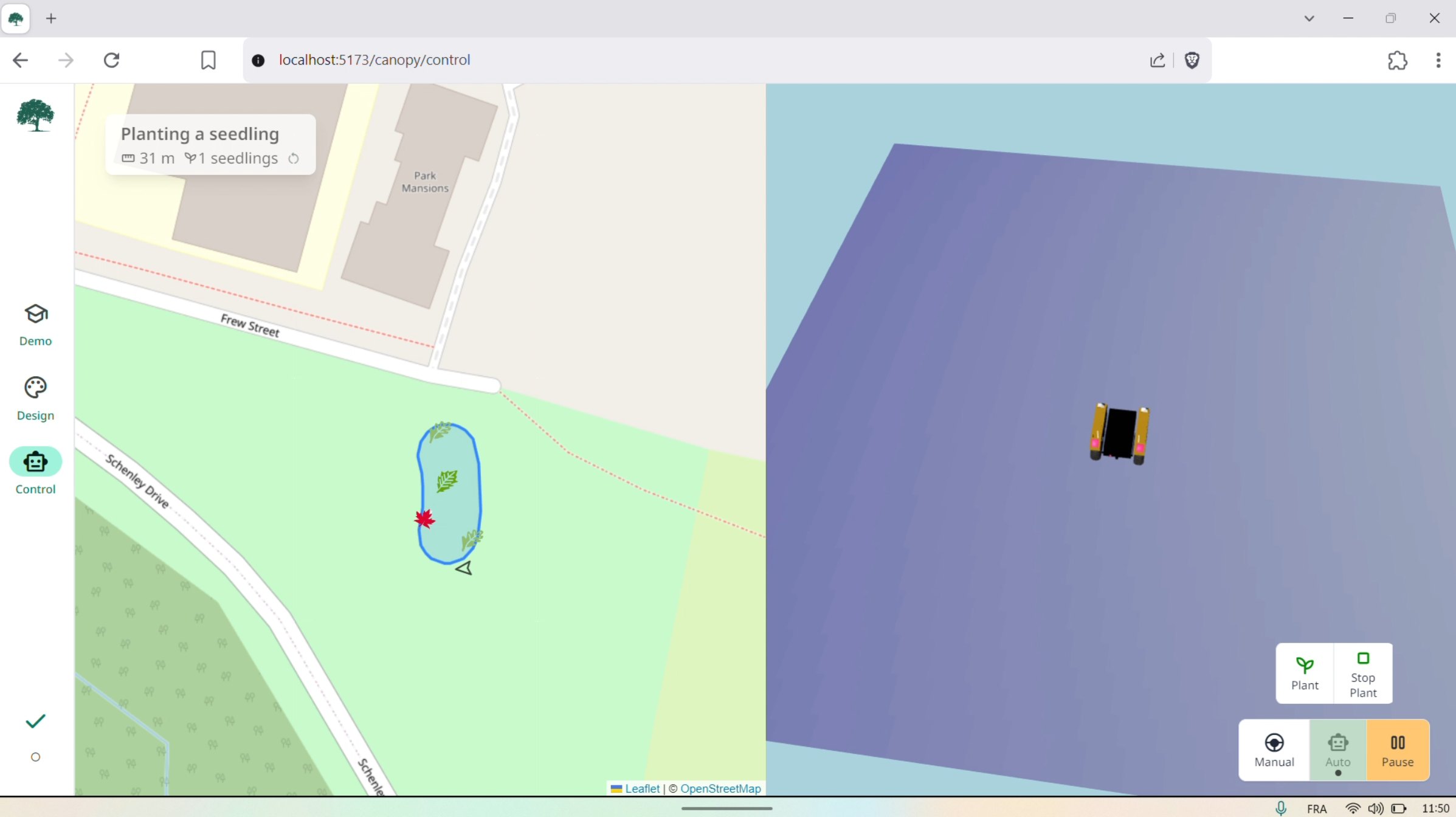

Early tests with my custom-built full-stack control dashboard (left) and my custom-built, Unity-based simulator (right).

Simulation & HIL testing

I developed Ecosim, a custom photorealistic farm robotics simulator in Unity that served as a drop-in replacement for hardware telemetry and actuation. This enabled hardware-in-the-loop testing and validation without needing the physical robot, which was critical for rapid iteration on behavior logic.

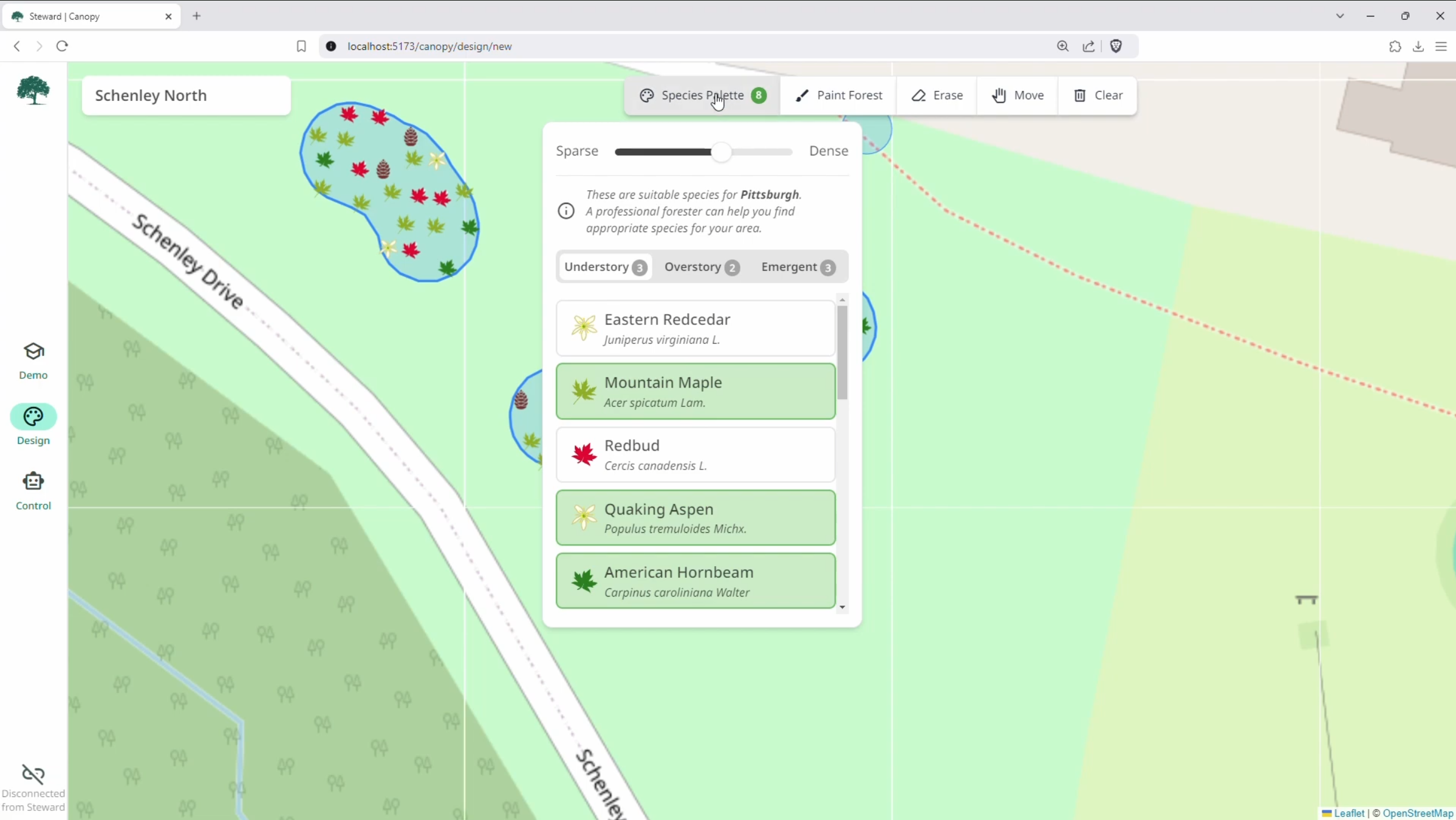

Canopy is a full-stack forest planner. Users select the trees they'd like to plant, guided by climate-appropriate recommendations, and simply paint them onto the map. The precise species mix and distribution are determined by an RL policy that I developed in the backend and trained on a forest growth model, with near-instant inference times. Planning a forest is as simple as finger painting.

Results

The robot won the Excellence in Regenerative Agriculture award at the 2025 Farm Robotics Competition, the largest competition of its kind in the world, and was invited to demo at FIRA 2025. We deployed the robot for a public planting project in Schenley Park in partnership with state and local officials.

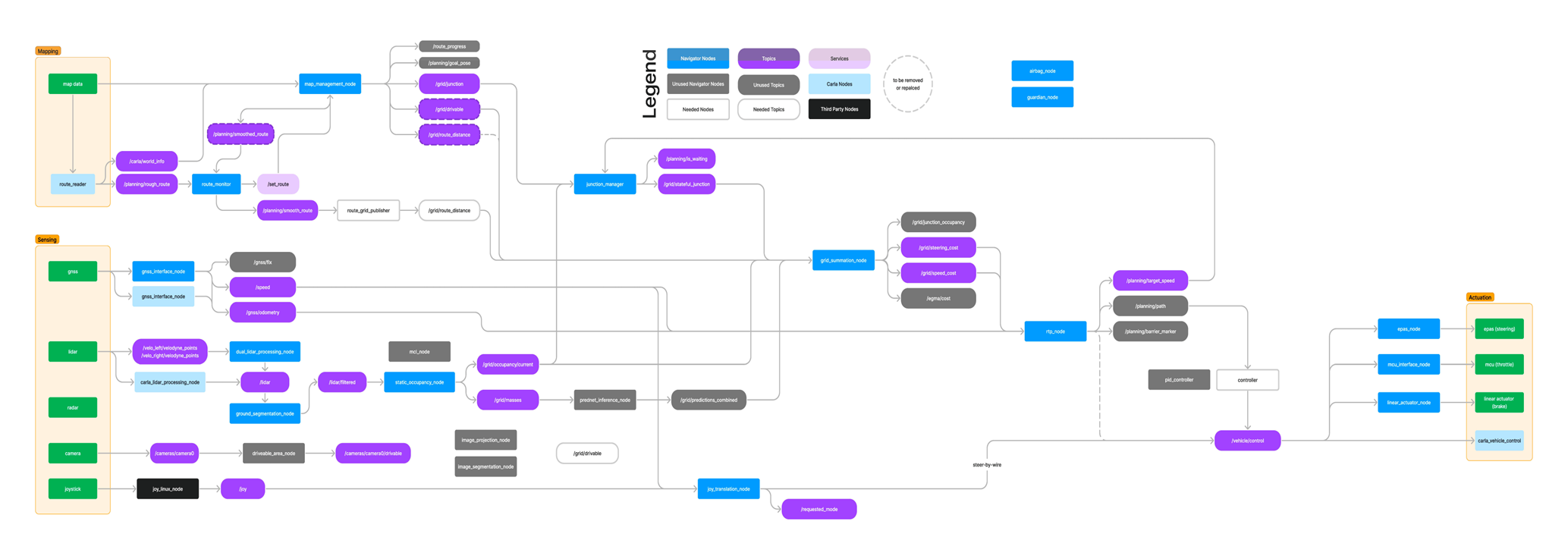

Autonomous driving platform at UT Dallas (2019 — 2023)

From 2019 to 2023, I founded and led an autonomous driving research project at UT Dallas that culminated in a publicly deployed self-driving car. I served as the project’s lead and software architect, and this is where I cut my teeth as a robotics generalist.

Behavior planning & task management

I designed and implemented the vehicle’s behavior management system in C++, using finite state machines to coordinate driving modes, safety states, and fault recovery. The system handled transitions between autonomous driving, manual override, emergency stops, and degraded-capability modes, all under hard real-time constraints, because a 2-ton vehicle doesn’t wait for your code to catch up.
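A table-driven state machine is one common way to structure this kind of mode logic. The sketch below is in Python for brevity, whereas the system described here was written in C++; the mode names, event names, and transition table are all hypothetical and only mirror the categories mentioned above.

```python
from enum import Enum, auto


class Mode(Enum):
    MANUAL = auto()
    AUTONOMOUS = auto()
    DEGRADED = auto()
    EMERGENCY_STOP = auto()


class Event(Enum):
    ENGAGE = auto()
    DRIVER_OVERRIDE = auto()
    SENSOR_FAULT = auto()
    FAULT_CLEARED = auto()
    ESTOP = auto()
    RESET = auto()


# Allowed transitions; any (mode, event) pair not listed leaves the mode as is.
TRANSITIONS = {
    (Mode.MANUAL, Event.ENGAGE): Mode.AUTONOMOUS,
    (Mode.AUTONOMOUS, Event.DRIVER_OVERRIDE): Mode.MANUAL,
    (Mode.AUTONOMOUS, Event.SENSOR_FAULT): Mode.DEGRADED,
    (Mode.DEGRADED, Event.FAULT_CLEARED): Mode.AUTONOMOUS,
    (Mode.DEGRADED, Event.DRIVER_OVERRIDE): Mode.MANUAL,
    (Mode.EMERGENCY_STOP, Event.RESET): Mode.MANUAL,
}


def next_mode(mode: Mode, event: Event) -> Mode:
    """Return the mode that follows `mode` when `event` occurs."""
    # An emergency stop is honored from every mode, so it bypasses the table.
    if event is Event.ESTOP:
        return Mode.EMERGENCY_STOP
    return TRANSITIONS.get((mode, event), mode)
```

Keeping the transitions in a single table makes it easy to review which mode changes are allowed, and an emergency stop can only be left through an explicit `RESET`.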

Navigation & path planning

I implemented the full navigation stack: an HD (semantic) mapping system for route-level planning, and local planners using A*, RRT*, and lattice-based approaches for real-time trajectory generation. Route candidates and trajectories were visualized in real time through a custom dashboard (three.js) mounted on the vehicle’s touchscreen.
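Of the planners named above, A* is the easiest to show compactly. The following Python sketch runs textbook A* on a small 4-connected occupancy grid with a Manhattan-distance heuristic. It illustrates the algorithm only and is not the vehicle's planner; the `astar` function and the grid encoding (0 for free, 1 for blocked) are assumptions.

```python
import heapq
from typing import List, Optional, Tuple

Cell = Tuple[int, int]


def astar(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Shortest 4-connected path on a grid of 0 (free) / 1 (blocked) cells."""

    def heuristic(cell: Cell) -> int:
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {}
    best_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk the parent links back to the start.
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # Stale heap entry; a cheaper route was already found.
        row, col = cell
        for nxt in ((row + 1, col), (row - 1, col), (row, col + 1), (row, col - 1)):
            n_row, n_col = nxt
            if not (0 <= n_row < len(grid) and 0 <= n_col < len(grid[0])):
                continue
            if grid[n_row][n_col]:
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                came_from[nxt] = cell
                heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None  # No path exists.
```

The function returns the path as a list of `(row, col)` cells from start to goal, or `None` when the goal is unreachable.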

Perception, localization, and mapping

I implemented and tuned SLAM algorithms using GTSAM, NDT, and KISS-ICP. I built camera and LiDAR sensor fusion pipelines, including octree-based spatial indexing and planar segmentation with RANSAC for ground filtering. Point cloud and occupancy grid data fed directly into the planning stack. I implemented an Extended Kalman Filter that fused RTK data with wheel odometry and IMU measurements to provide centimeter-level accuracy, even when GPS signal was obstructed.
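To show why fusing odometry with GPS helps during signal dropouts, here is a deliberately simplified Python sketch: a one-dimensional linear Kalman filter, not the Extended Kalman Filter described above. Odometry velocity drives the prediction step, and a GPS position corrects it when a fix is available. The function names, the scalar state, and the noise values are all assumptions.

```python
from typing import List, Optional, Tuple


def predict(x: float, p: float, velocity: float, dt: float, q: float) -> Tuple[float, float]:
    """Propagate position `x` and variance `p` using odometry velocity."""
    return x + velocity * dt, p + q


def update(x: float, p: float, z: float, r: float) -> Tuple[float, float]:
    """Correct the estimate with a position measurement `z` of variance `r`."""
    gain = p / (p + r)
    return x + gain * (z - x), (1.0 - gain) * p


def fuse(velocities: List[float],
         gps: List[Optional[float]],
         dt: float,
         q: float = 0.05,
         r: float = 1e-4,
         x0: float = 0.0,
         p0: float = 1.0) -> List[Tuple[float, float]]:
    """Run the filter over paired samples; a GPS entry of None means no fix."""
    x, p = x0, p0
    history = []
    for velocity, fix in zip(velocities, gps):
        x, p = predict(x, p, velocity, dt, q)
        if fix is not None:
            x, p = update(x, p, fix, r)
        history.append((x, p))
    return history
```

While fixes are missing, the estimate keeps advancing on odometry alone and its variance grows by `q` per step, then collapses again as soon as a fix returns.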

Low-level systems & hardware integration

I wrote low-level C++ interfaces to safety-critical systems, including the CAN buses that directly control the vehicle’s pedals and steering. I built high-performance sensor filters and developed custom binary protocols for streaming LiDAR packets over WiFi in real time.
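For a sense of what a compact binary point-cloud packet can look like, here is a small Python sketch using the standard `struct` module. The layout is hypothetical (a little-endian header with sequence number, timestamp, and point count, followed by float32 XYZ triples) and is not the protocol used on the vehicle.

```python
import struct
from typing import List, Tuple

# Hypothetical layout: uint32 sequence, uint64 timestamp (ns), uint16 count.
HEADER = struct.Struct("<IQH")
# Each point is three little-endian float32 values: x, y, z.
POINT = struct.Struct("<fff")

Point = Tuple[float, float, float]


def encode_packet(seq: int, stamp_ns: int, points: List[Point]) -> bytes:
    """Pack a header followed by the points into one byte string."""
    body = b"".join(POINT.pack(*point) for point in points)
    return HEADER.pack(seq, stamp_ns, len(points)) + body


def decode_packet(data: bytes) -> Tuple[int, int, List[Point]]:
    """Inverse of encode_packet; returns (seq, stamp_ns, points)."""
    seq, stamp_ns, count = HEADER.unpack_from(data, 0)
    points = [
        POINT.unpack_from(data, HEADER.size + index * POINT.size)
        for index in range(count)
    ]
    return seq, stamp_ns, points
```

With this layout a point costs 12 bytes and the header 14, so the per-packet overhead stays small compared with a text-based format.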

Debugging complex cyberphysical systems

Deploying a self-driving car on public roads means debugging at every layer of the stack. I’ve profiled rendering pipelines in a custom-built simulator, traced faulty terminating resistors in CAN networks with a multimeter and oscilloscope, and diagnosed timing issues across distributed ROS nodes. When something goes wrong at 30 mph, you learn to be systematic.

Technical depth

C++: Safety-critical CAN bus interfaces, high-performance sensor filters, behavior state machines, firmware for sensors and actuators. I’m comfortable working day-to-day in a C++ codebase.

Robotics generalist: Behavior trees and FSMs, grasping and manipulation, navigation and path planning (A*, RRT*, lattice planners), SLAM and localization (GTSAM, NDT, KISS-ICP), perception and sensor fusion (camera, LiDAR, octrees, RANSAC), hardware debugging with oscilloscopes and multimeters.

Integration: I’ve built systems where perception, planning, control, and hardware all have to work together in real time — on a self-driving car, on a field robot, and across a fleet of 24 research arms. I enjoy the puzzle of making it all fit.

ROS/ROS2: Used extensively across both the reforestation project and lab operations at RAI. Docker, Bazel, and CI/CD for deployment.

Python: Web servers, SLAM implementations, CNNs, automation tooling. I reach for Python when C++ isn’t needed and when rapid iteration matters.
